Nvidia has released the KAI Scheduler, a component of the Run:ai platform, as open source under the Apache 2.0 license. The move broadens Nvidia’s software portfolio and signals the company’s commitment to collaboration and community engagement. With KAI open-sourced, developers anywhere can inspect the scheduler, give feedback, and contribute improvements to the AI infrastructure they depend on.

Understanding the KAI Scheduler

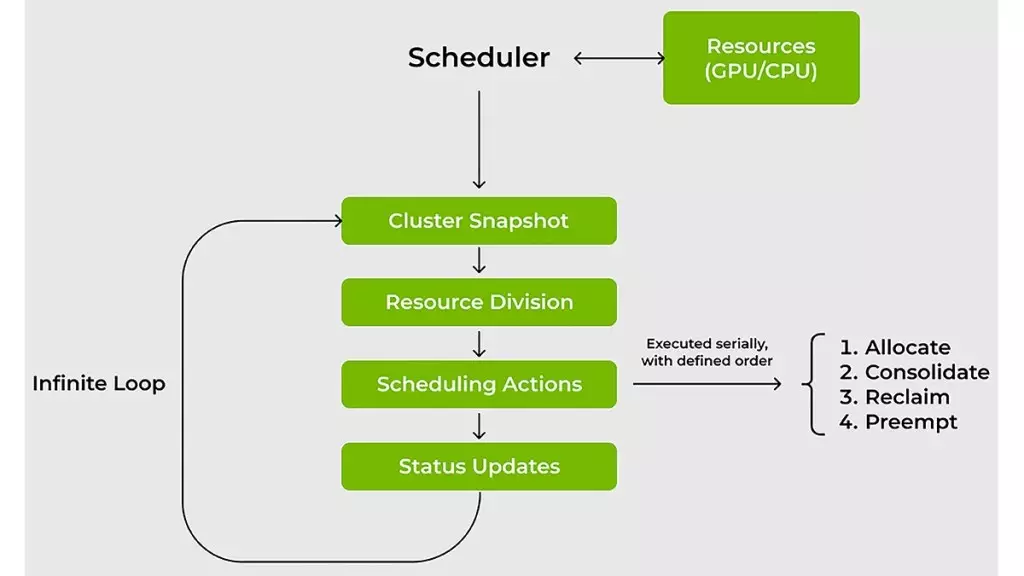

Nvidia’s KAI Scheduler, a Kubernetes-native scheduler, targets a persistent problem: managing AI workloads across GPUs and CPUs. Traditional resource schedulers struggle with how quickly AI demand changes. One moment a user needs a single GPU to explore data; the next, a team needs many GPUs for large-scale training. The KAI Scheduler is purpose-built for this variability, allocating resources quickly while keeping allocations fair and aligned with priorities as workloads fluctuate.

Dynamic Adaptation: The Heart of KAI Scheduler

The scheduler’s strength is its ability to recalibrate continuously based on real-time demand. Unlike static schedulers, KAI monitors workload changes and adjusts resource allocations accordingly. This matters for machine learning (ML) engineers, who often work under tight deadlines: shorter wait times save money and let teams spend their attention on the work itself rather than on resource management. KAI combines gang scheduling (all parts of a job start together or not at all), GPU sharing, and hierarchical queuing, so users can submit batches of jobs and move on to other priorities without giving up performance.
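To make gang scheduling concrete, here is a minimal sketch of the all-or-nothing idea. It is not KAI's implementation: the function `gang_schedule`, the node names, and the greedy largest-first placement are all assumptions chosen for illustration, and a greedy pass can miss a placement that a smarter search would find.

```python
from typing import Dict, List, Optional


def gang_schedule(free_gpus: Dict[str, int],
                  pod_requests: List[int]) -> Optional[Dict[int, str]]:
    """Place every pod of a job, or none of them (all-or-nothing).

    free_gpus maps a hypothetical node name to its free GPU count.
    pod_requests lists the GPUs each pod in the job needs.
    Returns {pod index: node name}, or None if the whole gang does not fit.
    """
    remaining = dict(free_gpus)  # work on a copy so a failed attempt changes nothing
    placement: Dict[int, str] = {}

    # Greedy heuristic: place the largest requests first.
    for idx in sorted(range(len(pod_requests)), key=lambda i: -pod_requests[i]):
        need = pod_requests[idx]
        # First node (in name order) with enough free GPUs for this pod.
        node = next((n for n in sorted(remaining) if remaining[n] >= need), None)
        if node is None:
            return None  # one pod does not fit, so the whole gang is rejected
        remaining[node] -= need
        placement[idx] = node

    free_gpus.update(remaining)  # commit only once every pod has a node
    return placement


cluster = {"node-a": 4, "node-b": 2}
print(gang_schedule(cluster, [2, 2, 2]))  # all three pods are placed
print(gang_schedule(cluster, [1]))        # cluster is now full, so None
```

The key property is that a partially placeable job reserves nothing: either every pod gets GPUs or the cluster state is left untouched, which avoids jobs that hold some GPUs while waiting indefinitely for the rest.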

Strategies to Optimize Resource Utilization

Nvidia has built two placement strategies into the KAI Scheduler to improve resource usage. The first, bin-packing and consolidation, combats resource fragmentation by packing smaller tasks onto partially used GPUs and CPUs so that their capacity is fully used. The second, spreading, distributes workloads evenly across nodes, which lowers the load on each node and keeps resources available everywhere. Together, the two strategies make computing more efficient and, by reducing underutilization in shared clusters, make it less wasteful.
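The difference between the two strategies comes down to which node is preferred among those that can fit a request. The sketch below illustrates that choice under simple assumptions; `pick_node`, the strategy names, and the node names are hypothetical and do not reflect KAI's actual configuration or scoring.

```python
from typing import Dict, Optional


def pick_node(free_gpus: Dict[str, int], request: int,
              strategy: str) -> Optional[str]:
    """Choose a node for a request of `request` GPUs.

    "binpack": prefer the node with the LEAST free GPUs that still fits,
               filling nodes up and leaving large gaps intact elsewhere.
    "spread":  prefer the node with the MOST free GPUs,
               evening out load across nodes.
    Ties are broken by node name. Returns None if nothing fits.
    """
    candidates = {n: f for n, f in free_gpus.items() if f >= request}
    if not candidates:
        return None
    names = sorted(candidates)  # fixed order so tie-breaking is deterministic
    if strategy == "binpack":
        return min(names, key=lambda n: candidates[n])
    if strategy == "spread":
        return max(names, key=lambda n: candidates[n])
    raise ValueError(f"unknown strategy: {strategy}")


free = {"node-a": 1, "node-b": 3, "node-c": 8}
print(pick_node(free, 1, "binpack"))  # node-a
print(pick_node(free, 1, "spread"))   # node-c
```

With bin-packing, the one-GPU task lands on the nearly full node, so the eight free GPUs on `node-c` stay available for a large training job. With spreading, the same task goes to the emptiest node.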

A Solution for the Resource Hogging Dilemma

A common problem in shared computing environments is that some researchers hoard GPUs, securing more than they immediately need. This creates bottlenecks and leaves other teams waiting while allocated GPUs sit idle. KAI Scheduler tackles the issue directly. It enforces resource guarantees so that every team receives its fair share of GPUs, and it dynamically reallocates idle resources to other workloads. The result is a more efficient cluster and less incentive to hoard, which in turn makes sharing between teams easier.
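One way to picture guarantees combined with reallocation of idle GPUs is a two-step calculation: first satisfy each team up to its guarantee, then lend whatever is left to teams that still want more. The sketch below assumes exactly that simplified model; `allocate`, the team names, and the round-robin lending rule are illustrative assumptions, not KAI's actual fairness algorithm.

```python
from typing import Dict


def allocate(total_gpus: int, guaranteed: Dict[str, int],
             demand: Dict[str, int]) -> Dict[str, int]:
    """Split GPUs between teams using guarantees plus borrowing.

    Assumes the guarantees sum to at most total_gpus.
    """
    # Step 1: each team gets what it asks for, capped at its guarantee.
    alloc = {team: min(demand.get(team, 0), guaranteed[team])
             for team in guaranteed}
    spare = total_gpus - sum(alloc.values())

    # Step 2: lend spare GPUs one at a time, round-robin,
    # to teams whose demand is still unmet.
    while spare > 0:
        hungry = [t for t in sorted(alloc) if demand.get(t, 0) > alloc[t]]
        if not hungry:
            break
        for team in hungry:
            if spare == 0:
                break
            alloc[team] += 1
            spare -= 1
    return alloc


guarantees = {"team-a": 4, "team-b": 4}
# team-a is mostly idle, so team-b borrows the unused GPUs.
print(allocate(8, guarantees, {"team-a": 1, "team-b": 10}))
# team-a's demand returns, so both teams fall back to their guarantees.
print(allocate(8, guarantees, {"team-a": 4, "team-b": 10}))
```

In the first call `team-b` ends up with 7 GPUs and `team-a` with 1; in the second, each team gets its guaranteed 4. In a real cluster, moving from the first state to the second would require reclaiming the borrowed GPUs from running workloads, which this sketch does not model.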

Simplifying Connections Among AI Tools

Connecting AI workloads to the various frameworks that launch them often means a maze of manual, labor-intensive configuration. KAI Scheduler’s built-in podgrouper removes this friction by automatically detecting and integrating with tools such as Kubeflow, Ray, and Argo. This shortens setup, speeds up the development cycle, and lets teams prototype faster and focus on innovation.
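The general idea behind grouping pods can be shown with a toy example: pods launched by the same higher-level workload are collected into one group so they can be scheduled as a unit. The sketch below is only an illustration of that idea. The dictionary layout, the `owner` field, and `group_pods_by_owner` are hypothetical and say nothing about how KAI's podgrouper is actually implemented.

```python
from collections import defaultdict
from typing import Dict, List


def group_pods_by_owner(pods: List[dict]) -> Dict[str, List[str]]:
    """Group pod names by the workload that created them.

    Each pod is a dict with a "name" and an optional "owner" tuple of
    (kind, name). A pod without an owner forms a group of its own.
    """
    groups: Dict[str, List[str]] = defaultdict(list)
    for pod in pods:
        kind, owner_name = pod.get("owner", ("Pod", pod["name"]))
        groups[f"{kind}/{owner_name}"].append(pod["name"])
    return dict(groups)


pods = [
    {"name": "train-worker-0", "owner": ("PyTorchJob", "train")},
    {"name": "train-worker-1", "owner": ("PyTorchJob", "train")},
    {"name": "ray-head", "owner": ("RayCluster", "experiments")},
    {"name": "notebook"},  # standalone pod with no owning workload
]
for group, members in group_pods_by_owner(pods).items():
    print(group, members)
```

Here the two training workers end up in one group, which is what would let a scheduler apply all-or-nothing placement to them without the user declaring the group by hand.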

The release of Nvidia’s KAI Scheduler is a significant moment for AI infrastructure. By open-sourcing a tool that addresses real problems in workload management, Nvidia improves day-to-day productivity for teams and contributes to a more collaborative technology ecosystem. As AI continues to evolve, tools like the KAI Scheduler help teams adapt to changing demands.