Meta, the conglomerate behind giants like Facebook and Instagram, has repeatedly attempted to position itself as a guardian of teen safety in the digital age. Recently, it announced a suite of new features aimed at tightening security and raising awareness among young users. While these measures seem commendable on paper, a skeptical analysis suggests that they may serve more as superficial gestures than as transformative change. Meta’s latest updates, such as embedded safety prompts, streamlined blocking tools, and expanded protective filters, are steps in the right direction, but their real efficacy hinges on how sincerely they are integrated into the platform and whether they can truly combat the darker facets of online harassment and exploitation.

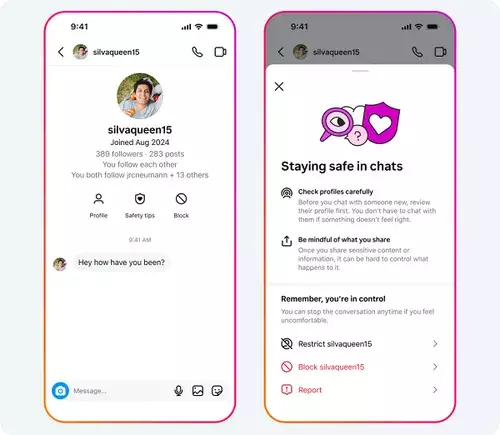

The introduction of prominent “Safety Tips” within Instagram chats attempts to educate teens on spotting scams and avoiding risky interactions. Although this initiative demonstrates a commendable desire to increase awareness, it also raises questions about its practical impact. Are these prompts frequent enough? Do they reach users who need them most? The effectiveness of such educational nudges depends on user engagement and whether the platform commits to ongoing reinforcement rather than relegating safety to occasional pop-ups.

Furthermore, Meta has simplified the process for blocking and reporting accounts, two fundamental tools in combating online harm. The combined “block and report” option is an attempt at streamlining the user experience. While ease of use is crucial, the core concern is whether reports are actually acted upon and lead to meaningful consequences. Platforms often struggle with moderation backlogs and limited resources, which can render these tools more decorative than effective.

A troubling aspect concerns adult-managed teen accounts. The company claims to have removed hundreds of thousands of accounts involved in sexual exploitation or the inappropriate solicitation of young users. While this indicates some level of ongoing policing, it is reminiscent of a cat-and-mouse game: aggressive enough for now, but always playing catch-up in an environment where malicious actors adapt quickly. Relying solely on content removal and account bans may not be sufficient; holistic solutions including education, community moderation, and stricter abuse detection are essential.

Meta’s investment in protective features like nudity filters, location sharing controls, and message restrictions illustrates an awareness of the complex landscape of teen safety. However, technological filters, while helpful, are not a panacea. Teens may bypass filters themselves, or be targeted directly through social engineering that no filter catches. The platform’s claim that 99% of users keep nudity protection active seems encouraging but hardly represents the entire picture: the filter can still be disabled, and users can be unwittingly exposed to harmful content in ways it does not cover.

The broader context of Meta’s safety initiatives cannot be divorced from ongoing regulatory debates. The company’s opposition to lowering the legal age for social media access, and its preference for a suggested threshold of 15 or even 16, demonstrates awareness of the need for age restrictions. Yet these efforts appear as much strategic as protective, potentially serving Meta’s interest in avoiding stricter regulation while still projecting an image of responsibility. The push for a uniform EU Digital Majority Age hints at a future where social media becomes more restrictive for younger users, a development that might protect them in principle more than in practice.

It’s essential to view Meta’s approach critically, as a mix of genuine concern and corporate interest. Real safety entails harder measures: rigorous moderation, better user education, and more accountability rather than just surface-level safety prompts. The company’s latest updates, while steps in the right direction, seem more like incremental adjustments than revolutionary changes, a reflection of its ongoing struggle to genuinely safeguard its most vulnerable users in an environment fertile for exploitation and harm. When scrutinized closely, it becomes evident that true progress demands more than technological fixes; it requires a cultural shift towards prioritizing user safety, transparency, and proactive intervention over profit.