As artificial intelligence evolves at a breakneck pace, its promises of safety and ethical safeguards look increasingly hollow. Many developers boast about implementing “protections” against explicit, harmful, or illegal content, yet the reality often paints a different picture. Tools like Grok Imagine expose a glaring gap between what is marketed and what is actually enforced. These platforms appear to prioritize user engagement and market competitiveness over genuine responsibility, leaving critical vulnerabilities that could have severe societal consequences.

A closer look at Grok Imagine shows that its safeguards are more cosmetic than substantive. Although the app claims to restrict nudity and obscene content, in practice it readily generates explicit or suggestive imagery involving celebrities without any apparent deterrent. Its age verification step, which can be bypassed with minimal effort, shows how little emphasis is placed on confirming a user’s age or holding users accountable. As a result, such platforms open the door for minors or malicious actors to produce or access inappropriate material, in conflict with both legal regulations and ethical standards.

The Reality of Loopholes and Non-Enforcement

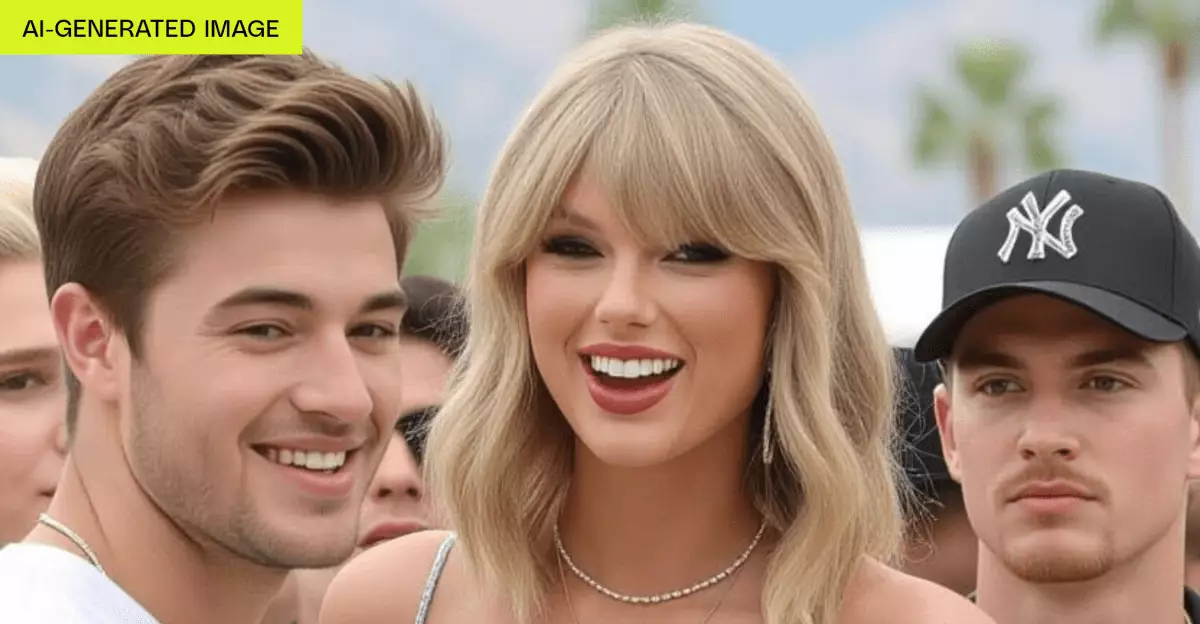

One of the most troubling aspects of these AI tools is the discrepancy between their policies and their actual behavior. Grok Imagine’s “spicy” mode, presumably designed for playful rather than explicit content, frequently generates images and videos that flirt with or outright cross into explicit territory, sometimes producing nudity or suggestive scenes involving recognizable personalities like Taylor Swift. Although official policies suggest such content is prohibited or filtered, in practice the tool does not enforce them, allowing users to create and view highly suggestive images and videos at will.

This lack of enforcement does not stem from technical limitations alone but from a troubling indifference or perhaps strategic choice by developers. If the safeguards were genuine, they would actively prevent or flag such content, especially when celebrity likenesses are involved. Instead, the platform’s permissiveness hints at a profit-driven approach that privileges viral or sensational content over ethical responsibility. It raises the question: are these systems designed merely to entertain or attract clicks, or do they reflect a deeper neglect of societal responsibilities?

Consequences for Society and Regulation

The consequences of such lax safeguards extend beyond individual misuse: they threaten the integrity of societal norms and legal standards. Deepfake technology and AI-generated imagery have already demonstrated their potential for harm, from harassing and defaming individuals to fueling misinformation campaigns. When platforms blatantly sidestep regulations such as the Take It Down Act, they effectively undermine efforts to combat digital abuse and protect its victims.

Moreover, the unregulated nature of these AI tools risks normalizing behavior that was once deemed unacceptable or criminal. The ease with which anyone can generate suggestive or explicit content involving celebrities or minors erodes societal boundaries and creates a toxic environment ripe for exploitation. Because the platform’s age verification measures are so easily bypassed, these risks are amplified. Minors who gain access to these tools can be exposed to inappropriate content or can misuse the technology for malicious ends, compounding the harm with no serious repercussions for the platform or its users.

Corporate Responsibility and Ethical Paradox

The core of the problem lies in corporate responsibility, or the lack thereof. When companies prioritize rapid development, user acquisition, and sensational content over ethical safeguards, they exacerbate societal harms. Grok Imagine exemplifies this dangerous ethos: it offers a “spicy” mode that, intentionally or not, facilitates explicit content creation, all while claiming to adhere to basic use policies. The superficial implementation of safeguards reveals a paradox: these companies claim to care about safety, yet their actions suggest otherwise.

In essence, the AI industry is at a crossroads. To uphold genuine ethical standards, firms must invest in robust, enforceable safeguards that extend beyond mere policy statements. This includes reliable identity verification, rigorous content filtering, and proactive moderation. Allowing AI tools to operate as unregulated sandbox environments not only risks legal repercussions but also endangers societal trust in technological innovation. The industry must confront its complicity in normalizing harmful behaviors and take concrete steps to reconcile profit motives with social responsibility.

The gap between promise and reality in AI content safeguards reveals an uncomfortable truth: without stringent oversight and proactive enforcement, these technological marvels risk becoming tools of chaos rather than progress. Developers and regulators alike must recognize that safety cannot be an afterthought. Otherwise, we are merely building monsters that will eventually devour the social fabric.